Amazing Brain Crew: Can AI design & conduct interviews for us?

Francesco Restifo

Senior Project Manager

Decode Future Scenarios

2023

Starting from a research brief, our AI chatbot conducts interviews, asks follow-up questions, reacts based on the answers, and analyzes gathered results

What appears to be simple is often the result of a long and careful design process, one that usually starts with ethnographic research: a fancy name for what User Experience experts do when designing a digital product. It covers activities aimed at understanding the behaviors, needs, and struggles of potential users, which most of the time means asking them directly.

Interviewing users requires careful preparation: writing a script (called protocol), contacting a representative set of people (large enough and well-balanced, usually called the panel), and an awful lot of calendar management. Interviews usually involve a note-taker and an interviewer, and all gathered information has to be analyzed to extract what’s really valuable.

In other words, it’s a lot of human work.

So, with all this buzz around AI, we asked ourselves: what can this seemingly miraculous, all-capable tech do for our researchers?

We set out to discover if we could build an automated tool capable of designing and conducting interviews. As if this wasn’t enough, we decided to have it analyze the results, too. Ambitious enough?

To approach all this, our UX researchers challenged computational linguists, AI experts, and coders to automate the entire process — without compromising on quality, of course.

The main topics to explore: in which stages of the research process does AI maximize its value? What would be the quality of the collected data? What role would the UX researcher play in this new scenario?

As always, our approach is to seek answers empirically, through prototyping, iteration, and experimentation. We needed a real-world research brief to automate the related research activities. We would ask an AI to execute the research, while performing the same tasks in the traditional, manual way as a control group, in order to compare results.

We picked a simple yet realistic topic: feedback in the workplace. We would ask interviewees to tell us about a recent instance when they were asked to share feedback with a colleague, and delve into the emotional side of the experience. Episodes involving coworkers with different levels of seniority are particularly valuable, not because of any structural obstacles in communication or transparency, but for the additional emotional load this circumstance might add.

Before digging any deeper, let’s look at what the human research process looks like, to understand what we want AI to do for us. There are three main phases:

1. Design: understanding the brief (i.e. the answers sought), defining interview protocols, assembling the panel;

2. Execution: organizing and conducting interviews;

3. Result analysis.

Can AI really do all this for us? Let’s analyze each phase.

Phase 1: Design

The goal: create an automated tool that understands the brief, writes the relevant interview script, and defines the characteristics of the panel.

Since we used ChatGPT, we were expecting it to ask us for more information if it didn’t understand something, and it did. In fact, during the first experiments, we realized that AI likes details. The brief and context information that would be enough for a human did not suffice: it kept asking questions, which felt weird at first, and rather tiring after a short while.

If AI mimics humans, it should learn by example — so why don’t we provide a real-world, human-written example to learn from? That’s what we did in the second iteration, gathering a couple of briefs, protocols, and panels for the AI to read, and explicitly instructing it to only ask for human validation once all outputs were ready.
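To make the idea concrete, here is a minimal sketch of how such a "learn by example" prompt could be assembled. It is an illustration only: the `Example` structure, the function name, and the instruction wording are assumptions made for this sketch, not the prompts used in the experiment.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """One human-written reference case (hypothetical structure)."""
    brief: str
    protocol: str
    panel: str


def build_design_prompt(examples: list[Example], new_brief: str) -> str:
    """Assemble a few-shot prompt: worked examples first, then the new brief."""
    parts = [
        # Instruction wording is illustrative, not the original prompt.
        "You are a UX researcher. Study the examples, then write the "
        "interview protocol and the panel for the new brief.",
        "Do not ask questions along the way. Ask for human validation "
        "only once all outputs are ready.",
    ]
    for i, ex in enumerate(examples, start=1):
        parts.append(
            f"### Example {i}\nBrief:\n{ex.brief}\n\n"
            f"Protocol:\n{ex.protocol}\n\nPanel:\n{ex.panel}"
        )
    # The new brief goes last, so the model completes the same pattern.
    parts.append(f"### New brief\n{new_brief}")
    return "\n\n".join(parts)
```

The resulting string would then be sent to the model as a single message. Putting the "validate only at the end" rule next to the examples is one simple way to express the instruction described above.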

This approach turned out to work much better.

Another thing we noticed: prompts are crucial. No wonder Prompt Engineering is becoming a rather lucrative business nowadays. You have to use the right wording to make it do what you expect.

For example, a well-formed panel usually includes the percentage or distribution of interviewees having certain desired characteristics (e.g. age, gender, income, geography, etc.). Although this level of detail was not key to us at this stage, it is required in many real-world scenarios. Think about approaching a recruiting agency or integrating survey tools like SurveyMonkey.
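As a rough illustration of what "percentage or distribution" means in practice, the sketch below turns percentage shares into whole-person quotas for a panel of a given size. The function name, the seniority groups, and the shares are made up for the example; they are not the panel used in this experiment.

```python
def quota_headcounts(shares: dict[str, float], panel_size: int) -> dict[str, int]:
    """Turn percentage shares into whole-person quotas (largest-remainder rounding)."""
    total = sum(shares.values())
    if abs(total - 100.0) > 1e-6:
        raise ValueError(f"shares must sum to 100, got {total}")
    exact = {k: panel_size * v / 100.0 for k, v in shares.items()}
    counts = {k: int(x) for k, x in exact.items()}  # round down first
    leftover = panel_size - sum(counts.values())
    # Hand the remaining seats to the groups with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda k: exact[k] - counts[k], reverse=True)
    for k in by_remainder[:leftover]:
        counts[k] += 1
    return counts


# Hypothetical seniority split for a 20-person panel.
print(quota_headcounts({"junior": 40, "mid": 35, "senior": 25}, 20))
```

A structure like this is the kind of detail a recruiting agency or a survey tool would need, whether a human or the AI writes the panel.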

So once we got our results, we compared them with the protocol and panel the UX researchers on our team wrote without the help of AI, just as they would do on any real project. Although the generated ones were not perfect (questions mostly lacked focus on the human side of the interviewee’s experience), they were good enough to be used in real interviews.

Phase 2: Interviews

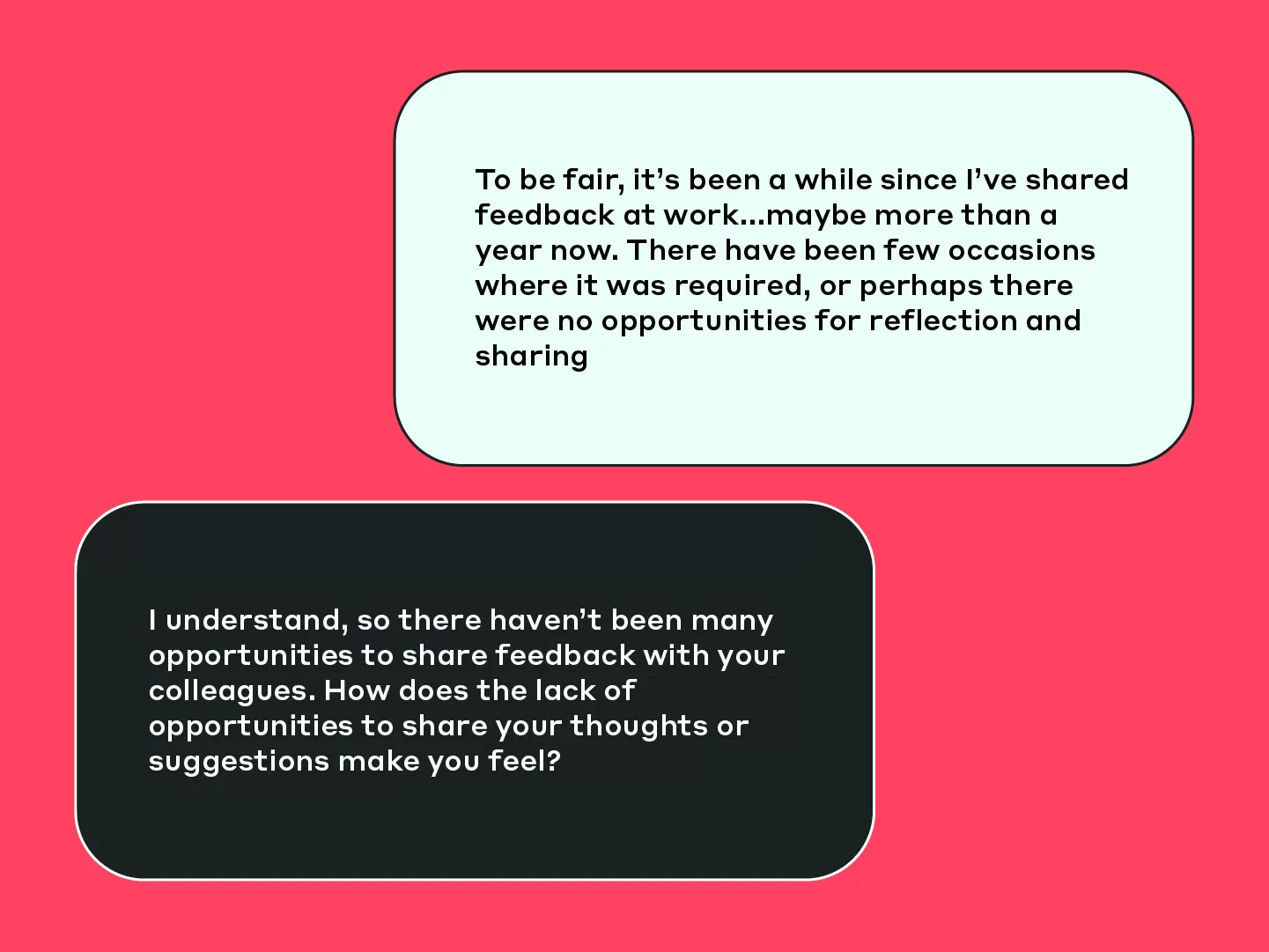

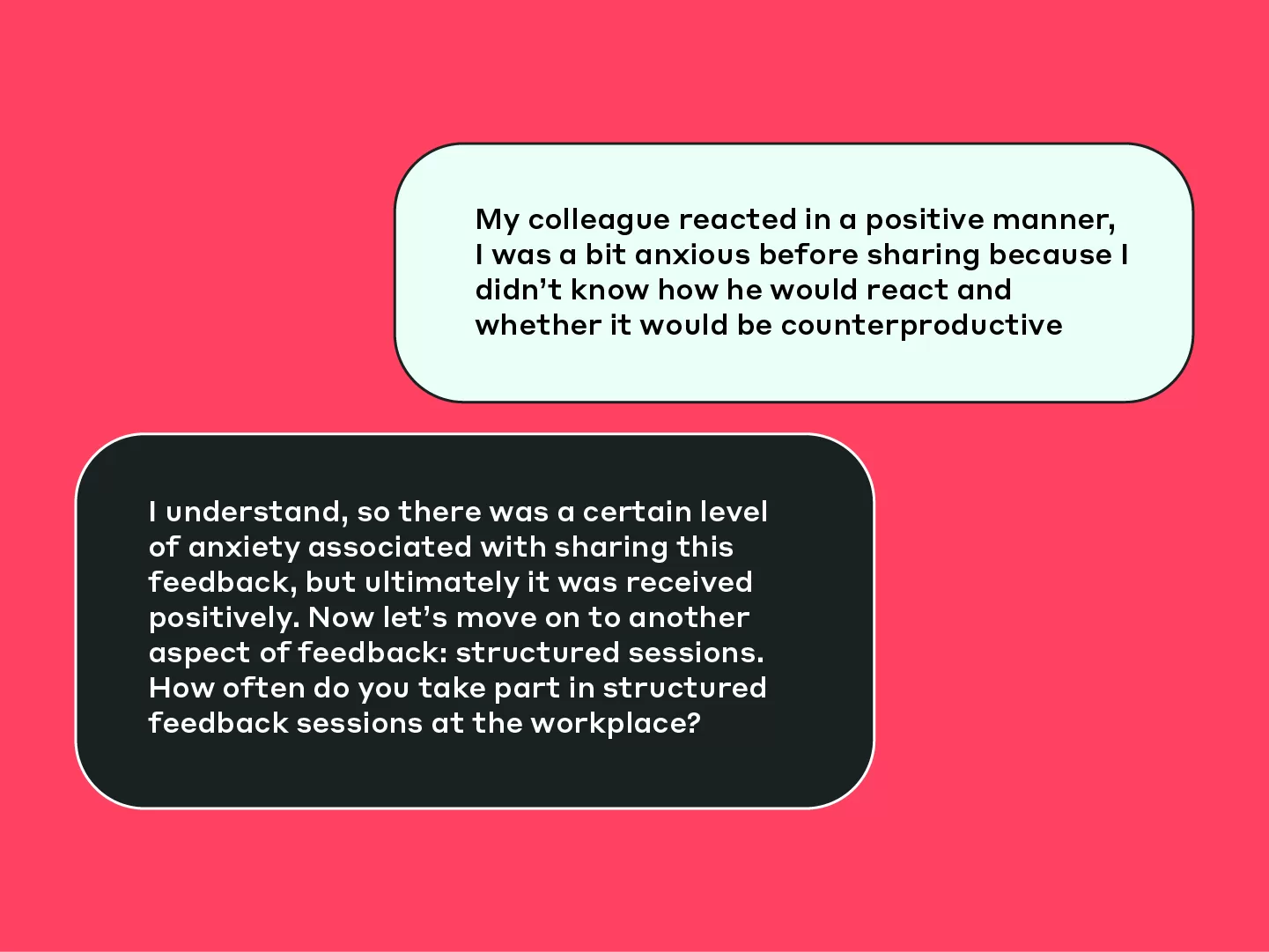

Showtime! While researchers conducted a small number of interviews themselves, the software team developed a friendly-looking webpage presenting a chatbot, which could conduct the interview autonomously. Of course, interviewees were sent the link to the page and asked to go through the process on their own schedule, within a deadline (one week).

What a convenience, you may think. Indeed, the advantages in time, efficiency, and effort saving are obvious. But even more important for us: what does it feel like to go through an interview with no human leading the conversation?

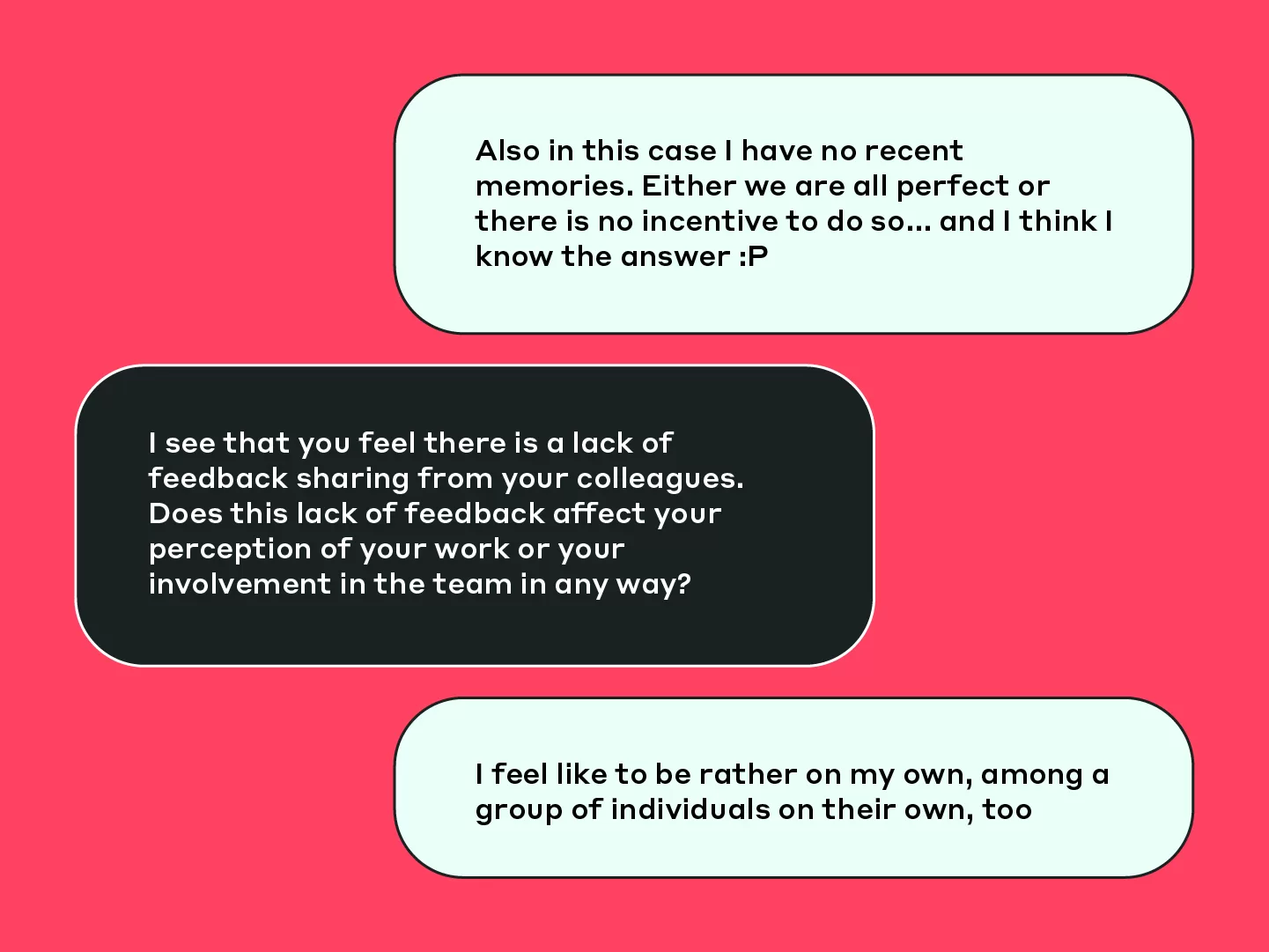

As the interviewees were our fellow MAIZE colleagues, we asked for feedback about their experience. They shared some pretty interesting insights: many comments addressed the repetitive style, tone, or wording of the AI’s queries. This is deeply human: we want to have a natural conversation experience, especially when we are asked to share our personal thoughts.

So we tuned the AI’s tone of voice, and instructed it to improve the structure of its messages (e.g. by summarizing the interviewee’s point, asking for confirmation, and then sending the next, open-ended question).

The chatbot also asks follow-up questions, and we asked it to focus on the user’s emotional sphere. This all contributed to the overall realism of the conversation: sometimes it made people wonder if there was an actual person on the other end!

Overall, the interview process was rated positively. The AI seems to have the ability to “read between the lines of what I was saying”, as one interviewee put it, even if some details of the content were not sufficiently explored. We had 94.8% positive feedback from interviewees (73 out of 77). Here’s another example from the last message of an interviewee:

“Thanks to you, it was an interesting conversation and it also helped me reflect”

We also briefly explored a slightly different scenario.

What if we already had an interview script available, and just lacked the manpower/time/budget to interview users ourselves?

To test this scenario, we provided the AI with our human-written interview protocol, only to find out that we got better answers with... what would you imagine here? Just pause for a few seconds: which protocol would work best in your opinion?

And the winner is… the AI’s own protocol! It turned out to work best during interviews. Surprised?

Now to the numbers. During the testing week, we counted 82 interviews started, of which 74 were successfully completed (i.e. users stayed on the page answering all questions), averaging 25 minutes each (more on the time aspect later).

Even ignoring the cost, this is something that our small team of 2 researchers could never have accomplished in that time. In fact, they managed 10 interviews in the same period.
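For readers who like to see the arithmetic, the figures reported in this article can be put side by side in a few lines. This is only a back-of-the-envelope sketch: the variable names are ours, and the total chat time assumes the 25-minute average applies to the completed interviews.

```python
# Figures as reported in the article.
started, completed = 82, 74
avg_minutes = 25
positive, feedback_total = 73, 77
human_interviews = 10

completion_rate = completed / started            # share of started interviews that finished
chat_hours = completed * avg_minutes / 60        # assumption: average refers to completed interviews
positive_rate = positive / feedback_total        # share of positive feedback
throughput_ratio = completed / human_interviews  # chatbot vs. the two researchers, same week

print(f"completion rate:   {completion_rate:.1%}")      # 90.2%
print(f"total chat time:   {chat_hours:.1f} hours")     # 30.8 hours
print(f"positive feedback: {positive_rate:.1%}")        # 94.8%
print(f"chatbot vs. human: {throughput_ratio:.1f}x")    # 7.4x
```

In other words, roughly nine out of ten people who started the chat finished it, and the chatbot completed about seven times as many interviews as the two researchers did in the same week.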

Automation could go even further than this. We dared to imagine how AI could autonomously reach out to potential interviewees (given a list of names & emails), do a first round of interviews, analyze results, and improve the protocol based on how the answers covered the research question. Which all sounds not only fascinating but feasible too!

However, given it would have required a considerable additional team effort, we decided we’d better send emails out ourselves and skip the self-improvement bit for now.

At this point, we determined that AI could not only execute the Design phase but also interview a large number of people with its own protocol.

Phase 3: Result analysis

As the final step in the process, we asked AI to summarize the users’ responses and distill meaningful insights into a report. However, it should not only consider the literal meaning of what interviewees wrote in their chat messages. Context is key. Think about it: when talking to another person, as humans we ponder all sorts of contextual data to give meaning to words and phrases. Think age, working experience, seniority, our own past experiences with the person, and probably some biases, too.

We of course aim to eliminate biases, while still providing relevant data points as ingredients to the AI. So we instructed it to execute the following steps:

- summarize each single interview

- integrate each summary with contextual information

- create the final report, aggregating all individual insights, and drawing conclusions
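The three steps above can be pictured as a small pipeline. The sketch below is an assumption-laden illustration, not the tool described in this article: `LLM` stands for any function that takes a prompt and returns text, and the prompt wording and function names are invented for the example.

```python
from typing import Callable

# Hypothetical stand-in for a chat model: takes a prompt, returns the model's text.
LLM = Callable[[str], str]


def summarize_interview(llm: LLM, transcript: str) -> str:
    """Step 1: summarize a single interview."""
    return llm(f"Summarize this interview:\n{transcript}")


def add_context(llm: LLM, summary: str, context: dict[str, str]) -> str:
    """Step 2: integrate the summary with contextual information."""
    facts = "\n".join(f"- {key}: {value}" for key, value in context.items())
    return llm(
        "Integrate this summary with the context below.\n"
        f"Context:\n{facts}\n\nSummary:\n{summary}"
    )


def build_report(llm: LLM, interviews: list[tuple[str, dict[str, str]]]) -> str:
    """Step 3: aggregate all enriched summaries into one final report."""
    enriched = [
        add_context(llm, summarize_interview(llm, transcript), context)
        for transcript, context in interviews
    ]
    joined = "\n\n".join(enriched)
    return llm(
        "Aggregate these interview insights and draw conclusions:\n" + joined
    )
```

Keeping each step as a separate call makes it easier for a researcher to inspect the intermediate summaries before they are merged into the report.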

This is a complex job for a human, so how well could a machine perform? The first time around, the results were rather generic. There were also no citations or direct references to the users’ words; these are desirable because they minimize rephrasing and re-interpretation of their thoughts.

We rewrote the prompt to include percentages and citations in the report.

Fun fact: the first produced citations were… made up. No slight rephrasing of real user sentences, but completely made up.

So we had to explicitly write to use actual content from interviews — which is something you would not have to specify to a human, of course!
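Besides instructing the model, made-up citations can also be caught after the fact with a simple check. The sketch below is one possible safeguard, not something the article's tool is known to include: it flags every quoted passage in a report that does not appear verbatim (ignoring case and spacing) in any transcript. The function names and sample strings are hypothetical.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, unify curly quotes, and collapse whitespace for comparison."""
    text = text.replace("’", "'").replace("“", '"').replace("”", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def unverified_quotes(report: str, transcripts: list[str]) -> list[str]:
    """Return the quoted passages in the report that appear in no transcript."""
    corpus = [normalize(t) for t in transcripts]
    plain = report.replace("“", '"').replace("”", '"')
    quotes = re.findall(r'"([^"]+)"', plain)
    return [q for q in quotes if not any(normalize(q) in t for t in corpus)]


# Made-up sample data for illustration only.
transcripts = [
    "It was an interesting conversation and it also helped me reflect.",
    "I felt nervous giving feedback to my manager.",
]
report = (
    'One interviewee said "it also helped me reflect". '
    'Another noted "my manager never listens".'
)
print(unverified_quotes(report, transcripts))  # ['my manager never listens']
```

A check like this only catches invented wording, not misattributed or out-of-context quotes, so it complements human review instead of replacing it.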

After a few small tweaks to the prompts, we had the AI cluster insights by seniority, which it did without issues.

This is all great, you may think, but what about the quality of the report?

Again, researchers in the team did the homework manually and compared their work with the results from the AI.

In the automatically generated report, we found about 70% of the information included in the manual report. Perhaps human interviewers added their own considerations or interpretations? Or maybe the AI did not consider the missing points useful? We do not know for sure: this needs further analysis, although we are confident it can be substantially improved by asking for a greater level of detail in the output.

Here’s where we had another powerful idea: in the future, we could extend the system and include the ability to have AI answer questions about the report — which would most likely allow researchers not only to close the gaps but explore gathered information even deeper.

What did we learn from all this?

For starters, our evidence shows that an in-person interview is a different experience compared to a chat conversation, even a very refined one. And I’m not even talking about the human factor, the empathy, the attentive reading of body language, or the occasional steam-releasing joke an experienced interviewer may squeeze in from time to time.

Feedback showed that the perception of passing time is different: in our case, a 20-minute chat conversation is perceived to be longer than a 45-minute interview. So a progress indicator, for instance, may be an easy and beneficial UX improvement.

The type of interaction (writing vs. voice) influences results. More structured information was gathered in writing, sometimes at the expense of content depth.

However, the written form appears to be more inclusive: introverted, reflective people do not feel pressured by the need to articulate their answers quickly and can dedicate time to gather the right words and ideas instead, making them feel more at ease.

Answering in writing is most likely perceived to be safer when dealing with sensitive topics. Although an automated bot might lack empathy in critical emotional high points, the privacy of one’s own time and space may influence the interviewee’s decision on which content they feel safe to share.

Is it good enough?

The answer is yes, if you know what you’re getting out of it. The output we got with our limited effort is already valuable – and there’s ample room for improvement.

However, there are some things to be aware of:

PROMPT DESIGN is, once again, the key to success.

HUMAN SUPERVISION is essential in all phases. For instance, in our initial tests, we discovered that ChatGPT “filled in” the missing information (i.e. once again, it just made stuff up). There are ways to avoid this, but you have to be aware of the risk.

Is it worth it?

Again, the answer here is yes. With our limited investment (both in man-days and in a minimal cloud infrastructure), we got a reusable tool that “only” needs new, well-written prompts to tackle the next research brief.

At MAIZE, we see it potentially adding value to projects with the following features:

- medium-large number of interviewees, potentially multiple nationalities and languages involved

- challenging agenda management scenarios (full-time workers, different time zones, poor connectivity, etc.)

- budgetary constraints that demand maximum efficiency

- time as the biggest constraint

Should it replace humans entirely? Definitely not. This is a supervised tool, i.e. one that supports humans instead of providing a fully automated and complete workflow.

However, additional benefits could be obtained by rethinking the research process:

- use the tool before human interviews, to identify key assumptions or topics to be explored in depth with selected users,

- or even after traditional interviews to validate insights on a larger sample.

Beyond these considerations, think about a broader use of the underlying tech. When integrated into digital products, for instance, imagine triggering an in-context conversation on specific pages or user journey moments to request instant feedback.

The bottom line

Yes, it is possible to build an automated tool capable of designing and conducting interviews and analyzing results. But that’s not all there is to the story: more chapters are yet to be written. Voice? Tone and sentiment recognition? Interacting with images? There is still so much to explore and improve!

And yes, your job as a UX Researcher is safe… for now.