The World Wide Web utopia

Humanity should be the purpose of data and machines at work, but today that's not quite the case.

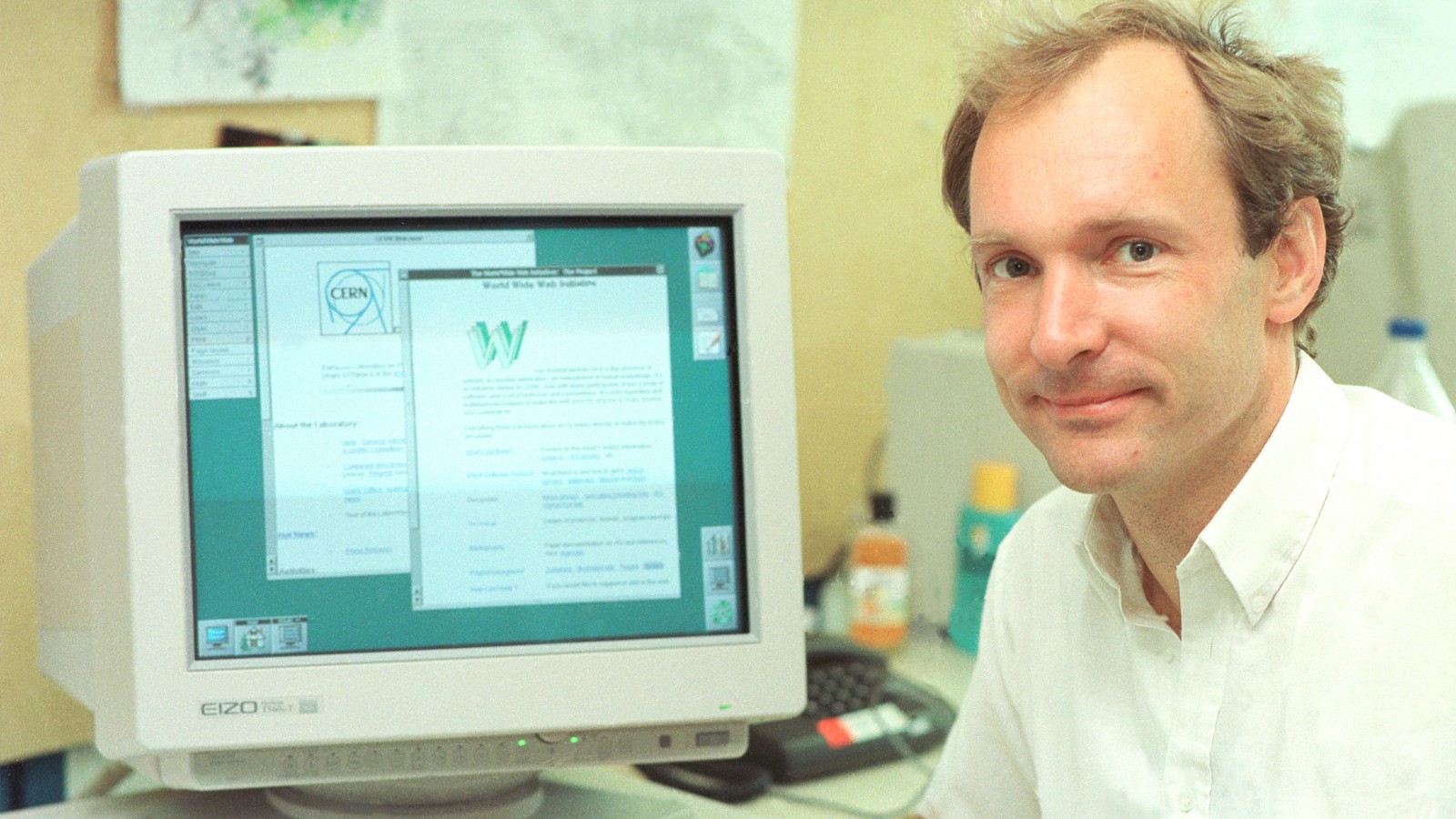

The World Wide Web was born in 1989 at CERN (European Organization for Nuclear Research), in Geneva, Switzerland, when Tim Berners-Lee proposed creating “a place where one could publish any information or reference which one felt was important, and a way of finding it afterwards.” A few years later, the Web had grown so big that a start-up company took on the mission of organizing global knowledge. Today, almost any new piece of information is linked to the Web and can be found by anyone, yet many people are worried that our data is held in proprietary silos, out of sight. Data and algorithms are weaponized to colonize our attention, sell us ever-increasing quantities of useless goods and subtly control our behavior. We should not be so surprised. As Stewart Brand observed in the 1980s, a tension remains unresolved: “Information wants to be expensive, because it’s so valuable. The right information in the right place just changes your life. On the other hand, information wants to be free, because the cost of getting it out is getting lower and lower all the time.”

Technological utopias are based on the myth of human empowerment through technology. Among the predicted consequences of this empowerment is the end of work, at least of work as a necessity (leading to Star Trek-style economies), whereas dystopias translate this overcoming of work into the sidelining of humanity (as in The Matrix). The data utopia is no exception to this founding myth. In an economy where data plays a growing role, for example, technology increases productivity enormously. This doesn’t mean the end of work, but in a capitalist economy it certainly allows wage and power gaps to widen between those who can master data and those who cannot, whether directly, as data scientists, or indirectly, through their managerial skills.

In a fully realized data utopia, humanity would be the purpose of data (and machines) at work. Yet humanity treated as a means to data is not a dystopia reserved for science fiction scenarios à la Matrix. In The Matrix, humans are used to produce heat and bioelectricity; in reality, they can do much more, such as thinking, annotating data, or delivering pizzas to other paying humans. Platforms such as Google or Facebook have brought about the most rapid realization of part of the vision that, in the 1990s and 2000s, belonged only to data utopians. But they have also put a great deal of people’s work at the service of data, training algorithms that certainly work for people, but for which some people are a little more equal than others.

So, how do we tip the balance in favor of a data eutopia rather than a data dystopia? Should we give people ownership of their personal data? And if we do, should we follow the “communitarian” strategy suggested by many participants in projects like DecodeProject.eu, or should we subject unpaid online work and personal data transactions to a pure market logic? Or should we be more active in using antitrust policies and similar market-compatible public policy tools?

We don’t know. Most likely, the answer is a combination of the above. The debate is a lively one, and it involves a growing number of people. And despite their huge cost and potentially negative short-run economic impact, regulations such as the European Union’s General Data Protection Regulation (GDPR) are another good sign on the road toward a data utopia: policymakers are ready to take on huge responsibilities in this domain, even when doing so affects powerful economic interests. This is certainly good news.