Human vs Artificial

The human mind and artificial intelligence share similarities and differences that we still don’t fully understand. What does the road ahead look like?

In conversation with Frank Pasquale by Francesca Alloatti

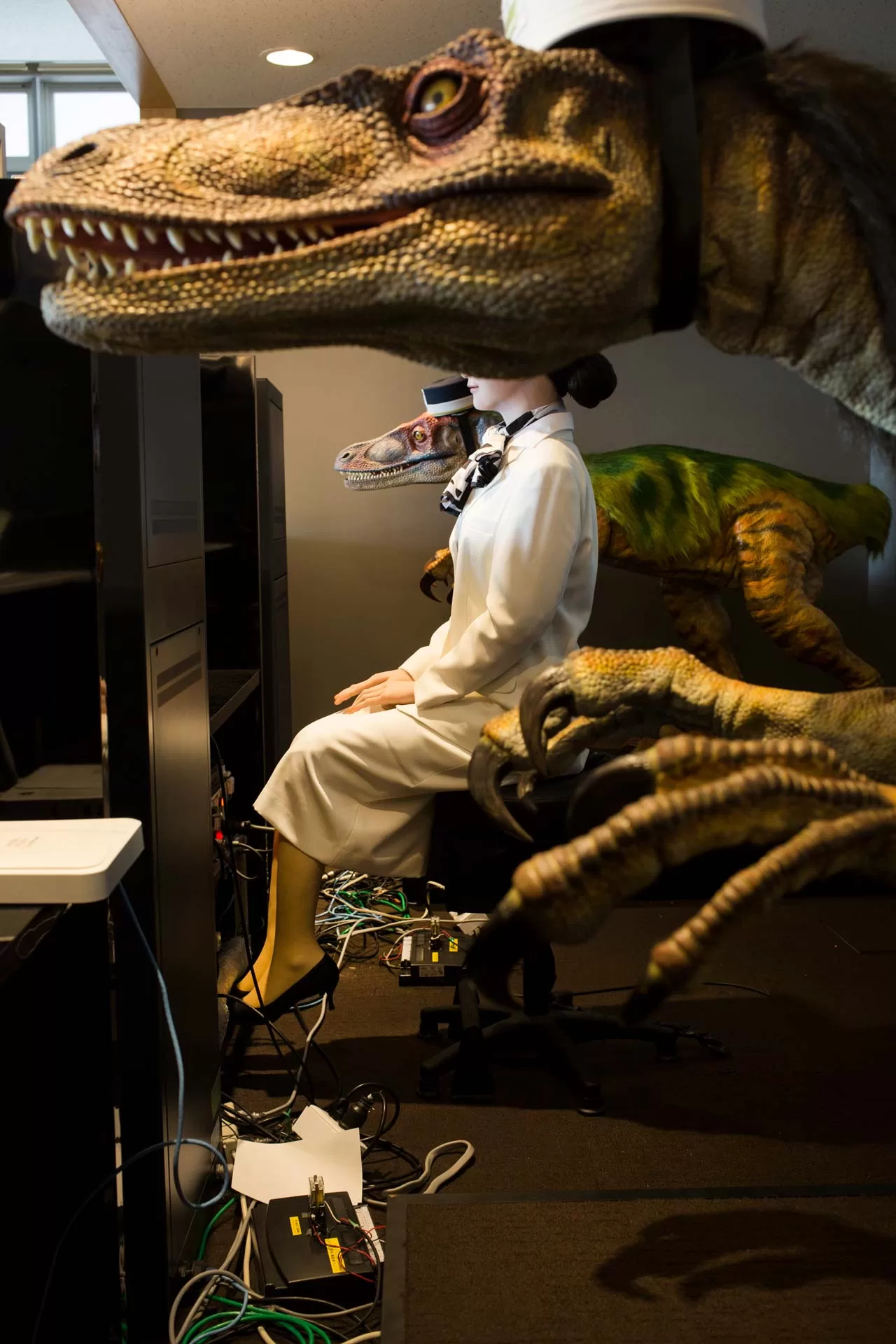

Photo by Alberto Giuliani. Telenoid robot in Tokyo, Japan: Telenoid, also created by Ishiguro, is a robot that recreates the emotional experience of a child. Its design is the result of decades of research on empathy between man and robot.

In recent years, the relationship between human beings and artificial intelligence has reached a peak of both encounter and clash. AI threatens to replace our jobs. At the same time, it fascinates us when it creates imaginative, albeit at times disturbing, works of art from simple prompts in natural language. In this scenario, it becomes increasingly important to ask ourselves what distinguishes a human being from artificial intelligence, how to live in harmony with it, and, above all, who is responsible for controlling its capabilities and the ways it intrudes on our daily lives.

Frank Pasquale has taught law at prestigious institutions such as the University of Maryland and Seton Hall University. He is now a professor at Brooklyn Law School in New York. In the 2000s, he began to take an interest in the intersection of law and technology, and in 2015 he published The Black Box Society, a critique of the lack of transparency about what very large firms, such as Google, are doing with AI and algorithms. Over the past five years, he has been working on New Laws of Robotics, a vision of how to create a more just and equitable tech sector. We spoke with him to understand where we are and, most importantly, where we are going.

In your latest book, you stress the importance of ensuring that an AI is not presenting itself as a human being, that it’s clearly stating its artificial nature. On the other hand, research has shown that humans tend to anthropomorphize any object they encounter, even when such an object clearly states, “I’m a machine.” What do you think is the right balance between our natural tendency to attribute anthropomorphic features to things so that we can establish a bond with them, and not being deceived by the intrinsically artificial nature of AI?

From a regulatory perspective, AI should not be deceptive. But you’re correct to say that there’s a great deal of projection, and people want to think of AI as something more than it is. There are a few different ways to break the spell. One is to shift from anthropomorphization to zoomorphization: to think of AI as an animal. That’s a fun way to think about this sort of thing, because when I read about people who become attached to their robot dogs, to me that’s far less troubling or pathetic than someone saying, "This AI is my girlfriend" (or "my human friend"). Another way of reducing the stakes is a concept Ryan Calo worked on, called "visceral notice." His idea was that if something is watching you, you are notified in a way you can immediately perceive. For example, if we’re on camera during a call, my computer has a green light on. It’s fascinating that this changed from a red light to a green one, and that’s an interesting design choice: it makes people feel "great, go ahead," as opposed to "watch out, you’re on camera." A third way is to repeat reminders. When people interact with Siri, they might ask a really personal question, and Siri will say, "I’m sorry, I can’t help you with that." It may be helpful to remind them that they are basically interacting with a search engine equipped with a voice, and nothing more. So another strategy may be to add a statement of its machine identity to that "I’m sorry." Those are three options to try to break the spell.

Speaking of spells: many people, perhaps because they lack expertise, have a sort of "magical thinking" about AI rather than a scientific understanding of it. The paradigm of informed consent has been challenged recently because people may not be aware of what they are consenting to. How important is individual awareness of these topics compared to broad legal regulations that are supposed to protect everyone but do not seem to be very effective?

Legal regulation could be much more effective if proper resources were put into it. I wouldn’t give up on regulation, but I would also argue that a certain level of computational literacy, just as we have literacy for reading or math, is going to be really important: you want people to be able to understand what’s going on at a deep level, as opposed to just being wowed by the latest technology promises.

So, will literacy make us more aware of the artificial nature of AI and help us fight our natural tendency to anthropomorphize artifacts?

There will be different social groups with different attitudes, and that will bring about certain clashes. One of the reasons I love the movie Her, which I mention in the last chapter of my book, is that it shows this potential clash. There’s a scene in which the protagonist brings Samantha [the AI] to a gathering and says, "Meet my new girlfriend, Samantha." People who consider themselves "robot rights" activists, as well as transhumanists, will put a lot of effort into defending that view. They will say things like, "If you don’t recognize his girlfriend, you’re anti-AI," which to them will be like being anti-gay or anti-trans. I think that’s totally wrong. It would actually be insulting to gay and trans people, because it would assimilate their experience to that of a machine. It would be saying that their experience lacks anything close to a human experience or human feelings. I believe there will be a battle, and quite a political one, between those who insist upon this projection and those who speak the truth, which is that AI cannot experience true human reality. I realize this statement may be controversial. Still, I believe it helps to understand that there will be political disagreements. One side will likely win, and it’s important to be aware of that. Future technology regulation may scramble current political orientations.

Photo by Alberto Giuliani. Humanoid robots in Japan: Hiroshi Ishiguro Laboratories at Osaka University. Professor Hiroshi Ishiguro is considered the father of humanoids. Here he poses with a robot, a copy of himself, which he used to teach classes when he was busy.

Since you mentioned the concept of transhumanism: what about it? What are your views on it?

I’m very skeptical of it. I developed my critique back in 2002, in an article called Two Concepts of Immortality, and I have been thinking about this ever since. I will give it a backhanded compliment: on the one hand, I think it raises an important question. The idea that a person could become immortal by downloading their brain into a computer, and whether that person’s self can be identified with their brain download or simulation, is a significant issue. It’s brilliantly staged in Neal Stephenson’s novel Fall; or, Dodge in Hell, which takes place in a future where people become entirely transfixed by the idea of brain download and by the experience of whatever bot, AI, or software gets developed that way. That software is experienced in a large, multi-user game where literally hundreds of millions of people interact with hundreds of billions of bots based on people’s best brains.

On the other hand, to me, transhumanism has to be recognized as a religious position. It’s not a scientific position because it does not deal with a verifiable reality. It is rather about affective, metaphysical commitments to the idea that one’s real self is something that can be replicated computationally. And I think that runs up against other visions that ultimately are more robust and lead to a more just society: the ones that say that this is not a form of identity that really mirrors the self. Instead, it’s just one artifact of the soul.

It is often said that one of the jobs that AI will not replace is the hairdresser. Maybe it’s because being a hairdresser requires a range of technical skills that cannot be easily substituted. But I also think it has something to do with the bonding that we usually have with our hairdresser. What do you think about that?

There have been two waves of research on this. The first wave said: watch out, "robots are taking over jobs!" That was the argument of Rise of the Robots, a book by Martin Ford, and a position that Oxford researchers and McAfee also backed. The second wave instead focused on the many places where human interaction is part of the job. An excellent article by Leslie Wilcox said: "rather than worrying about doing too little work in the future, people will be worrying about infinite work." The reason is that technology may create all sorts of new duties and opportunities for anyone who’s a professional. There are a lot of jobs where technology is going to give you more work, rather than less. For instance, a doctor will have to be aware of all sorts of new studies about AI and medicine; you may need five people to assist the doctor just so that she understands everything that’s going on. It reminds me of something that I wanted to discuss in my book: at the Henn-na Hotel in Japan, they had robots at the front desk and a little robot in each room that was supposed to answer guests’ questions. Ultimately it all failed, because the robots couldn’t keep up with all the different social interactions one can have in a hotel. There are just too many random things that happen that you can’t anticipate. Perhaps, eventually, we will get to a robot hairdresser. But that may end up being a tier of service for people who are either frugal or have very little money. Imagine a future with both robot and human hairdressers, in which universal basic income covers only $2 a month for a haircut. Most people will have to go to a little automat, while those who earn more can go to someone in person.

So, will some human skills become a sort of premium service?

I think that could happen. The people who are most skeptical of lawyers say: "in the future, going to a lawyer will be like getting a bespoke suit," something only the wealthy, the top five percent of the population, can afford. On the other hand, I’m struggling to think of an example where 90 percent of people would see a human for a service and 10 percent just a bot.

Do you think there are some human skills that we particularly need to defend due to the rise of AI? And if so, why are they under attack?

The skills one should cultivate need to be at the intersection of two things: what is hard to automate and what is a humanly enjoyable and fulfilling activity. The promise of AI is to take away the most tedious parts of our jobs and let us focus on what we actually do best, which includes interpersonal skills and high-level language skills. The ultimate irony would be that in a world where we’re supposed to worship STEM, STEM skills are the ones most likely to be automated. A few years ago, I gave a talk at an accounting conference in Denver. Many accountants worry that they’re just going to be automated away, but there are many forms of human judgment involved in accounting, and that human judgment, I think, is critical. To answer the question directly, the argument really is about the exercise of judgment in its fullest sense. Brian Cantwell Smith discusses this in his book The Promise of Artificial Intelligence: Reckoning and Judgment. He distinguishes reckoning, the kind of calculation at which machines excel, from judgment, which is required wherever there has to be some fusion of observation and normative evaluation. That’s where human skills are essential.

However, AI and automation are not necessarily the same, correct?

Yes, AI has a lot of different aspects. Beyond automation, there are forms of recognition or forms of interaction that AI deals with.

Photo by Alberto Giuliani. Humanoid robots in Japan: Henn-na Hotel in Nagasaki Prefecture. This hotel is managed exclusively by humanoids and robots.

What do you think AI will be like in the future? Will it focus on full automation, so as to succeed in contexts such as autonomous driving? Or will it just be an auxiliary system for human beings?

I think there will be only two paths. On the first, countries with a strong central government willing to invest in making the infrastructure for the cars robust and reliable will get fully automated driving within 10 to 20 years: places like Singapore or China, perhaps Japan and Taiwan. Only a few jurisdictions have state power strong enough to take human drivers off the road, since those drivers will become a source of unpredictable errors. The countries that adopt fully automated driving will have to make sacrifices, too. If you really want to make the system robust and resilient, you may well have to make the case that nobody can cross the street wherever and whenever they want, right? There’s an intersection in China where people who jaywalk are sprayed with water. That would be an excellent way to advance toward automation, training the pedestrians well! If that happened in any American city, I’m sure the mayor would be voted out immediately. There would be a huge rebellion. Different paths will also likely be taken based on relatively deep political and cultural responses to assertions of state authority.

In most parts of the world, automated driving will arrive as incremental additions to what human drivers do. In both cases, automated driving still faces what Helen Nissenbaum and Wendy Ju called "the handoff problem": the moment when a human driver needs to take control because there’s snow, a dust storm, or some other phenomenon that makes it impossible for the computer to manage the road ahead. I know how to be a driver, and I also know how to be a passenger. But expecting people to pick up this third, liminal role between passenger and driver is not easy at all: they would have to be ready to be called into action at any time. Some people will get used to it, while others will not find it a very enjoyable experience and may just want to drive "as humans" like before.

Some people will be enthusiastic about the novelties brought by AI. Others are going to be absolutely against it. What about the middle ground?

So much depends on politics. The great advantage of being in Europe is that the European Commission is a supranational governance mechanism of the right size and scale of authority to engage in long-term thinking about integrating AI into society. In the US, we entirely lack that. The Obama administration tried to deal with AI. It was a little late to the game, but it did robustly try to think about what AI would do to labor markets and tried to put some very basic guardrails in place. The Trump administration completely ignored that. Its sole thought about AI was, "how do we make companies develop faster?" There was no question of regulation. The Biden administration, by contrast, has a totally different approach. A better approach, I think, but different. So first policy goes in one direction, then another… it’s chaotic. I feel that in the US, one of the largest firms will essentially be able to impose its vision of the future of AI on US citizens in a monopolistic fashion. In Europe, by contrast, I think there will be more bargaining involving public authorities. Small countries will be in a particularly difficult situation, because they face societies that demand access to these technologies, and if they push too hard against the providers, they’ll upset a lot of mechanisms. Australia has been pretty scrappy in pushing back against some of the tech giants. In China, you have a very strong state that is able to assert a lot of power over the development of AI applications. In the broadest brushstrokes, the US presents a cyber-libertarian approach, China takes a very top-down approach, and Europe is trying to combine the best of both worlds, possibly succeeding.

We are all familiar with the movie 2001: A Space Odyssey, the evil virtual assistant HAL 9000, and its deeds. Do you think that’s something that might actually happen? It used to be science fiction; is that still the case?

I think it’s a real possibility. The most interesting question regards the "off" button, which, after all, is what HAL was arguing with Dave about. At the end of my book, I talk about the contemporary reimagining of the robot Adam from Ian McEwan’s novel Machines Like Me. In the original version, the robot got out of control as soon as the owner became aware that if he tried to turn the robot off, it would kill it. It’s very easy to imagine machines such as police robots being developed along similar lines. Take, for instance, the history of robot dogs. Ironically, the first robot dogs were supposed to be cute pets, and they’ve evolved into killing machines, or at least subduing machines: their goal now is to subdue people. Material circulating online advises that if one of these robot dogs attacks you, you should know that the battery pack sits where a real dog’s stomach would be, so that you can try to take the battery out. To me, the rule should be that if any of these devices gets within three feet of a person, that person should be able to turn it off immediately and intuitively.

Another example is a firm that had something like an automated trading bot that it couldn’t stop fast enough. It lost something like $450 million for them. I think the premium placed on speed is what is going to lead to more out-of-control AI, and that’s when we really need to worry.