Faint echoes

Glimpsing the future through an AI, reflections are hazy and indistinct

by Pietro Minto

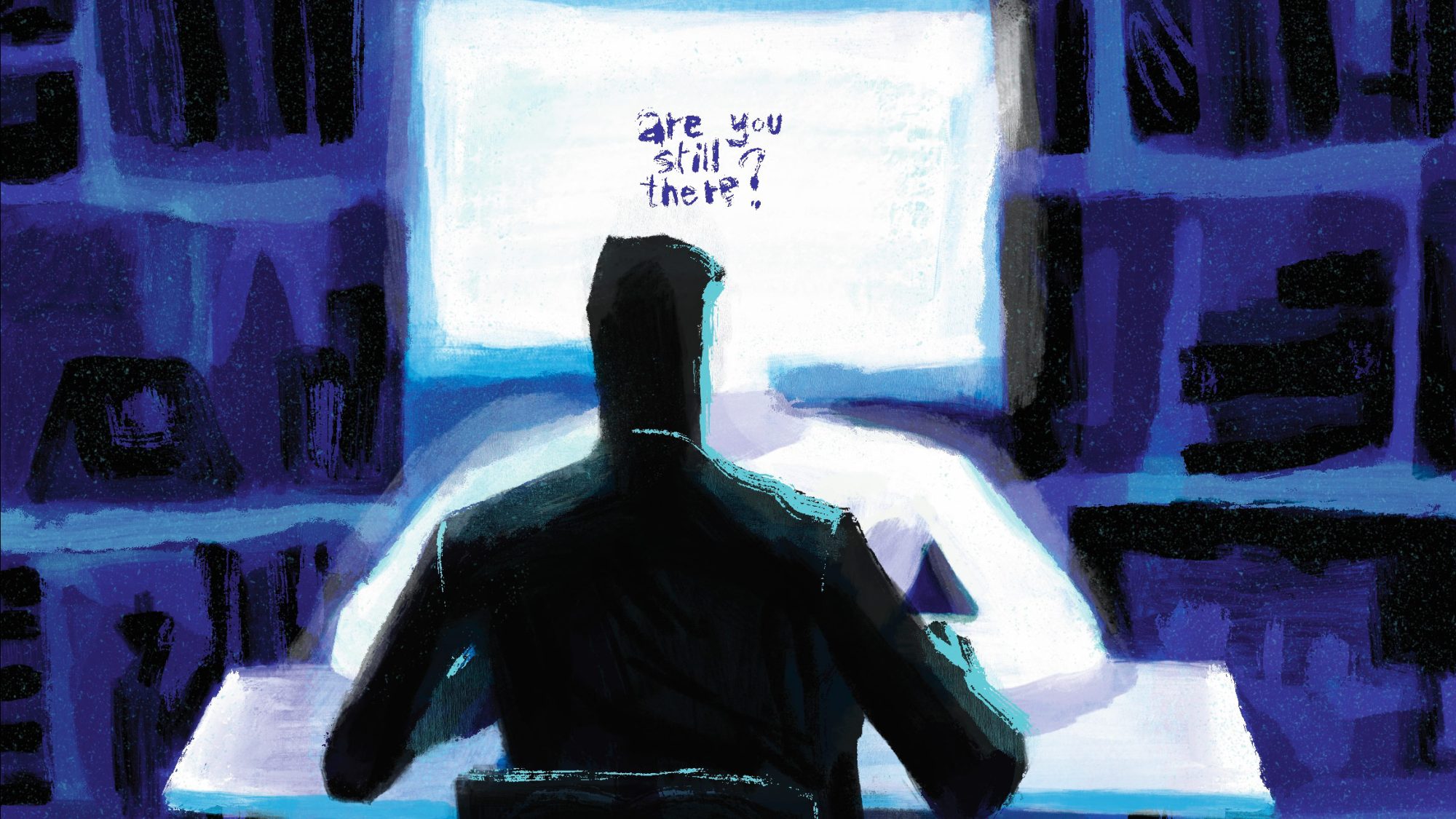

“Are you still there?” That’s the question I asked, or should I say “I will ask”, about 23 years from now, when I’ll be sixty and, as in Nolan’s Interstellar, I’ll find myself talking to the present-day version of myself. Okay, I know it’s a little complicated. But let me explain.

I’m talking about an experiment I was invited to participate in on behalf of MAIZE, using FutureYou, a chatbot developed with the powerful resources of the Massachusetts Institute of Technology, the famous MIT, and designed to simulate a conversation with your future self. But first, you have to fill out a questionnaire (quite long, but we’ll come back to that) to determine what kind of future you want to create.

The goal of this preliminary – and fundamental – step is to describe who you are today and how you envision yourself in the future, thus orienting the chatbot to be anxious or hopeful, fulfilled or frustrated, and so on. For example, you might decide that in the future you will be married and have two kids, or that you will have become a successful artist. That’s your first choice: whether to be optimistic, realistic, or pessimistic. By answering multiple-choice questions, you can craft an ideal or alarming profile of yourself – and thus your future – and influence the final result. Given the current situation, I preferred to indulge in a little self-deception and go for a positive, pleasant outlook.

As for the questionnaire, your answers are the only input the chatbot has to work with, so my advice is not to skimp on the details: FutureYou won’t work if you fill out the questionnaire hastily and with just a few words. Try to make up a persona from just “My name is John and I like soccer”. Not much to go on. Boring. Answer the question about your passions with “I like to read” and you’ll end up with a sixty-year-old who can’t do anything but talk vaguely about books, in the statistically mediocre way typical of large language models, LLMs, the tech we can assume powers this experiment.

Once you have filled out the questionnaire and given some feedback on a few questions about anxiety and personal satisfaction, FutureYou kicks into gear. A spinner appears, and within seconds, a minute at the most, the experiment begins. That’s when you get to talk to it – or rather, read it, because from what I’ve seen, FutureYou tends to launch into long, intense monologues that don’t leave much room for back-and-forth. In short, the AI introduces itself right away, but with an awkwardness that is a bit disturbing and unnatural.

The first thing that came to mind when writing to my 60-year-old self was to ask it to please shut up for a second. Not the best icebreaker, I admit. The bot is also pushy: if you get distracted or start answering emails while the AI is processing the results, it acts as if you’re ghosting your future self, and sternly asks, “Are you still there?” Yeah, yeah. Just a minute, I’ll be right there.

ILLUSTRATION by Marco Brancato

The fear of being ghosted seems to be the defining trait of this chatbot. It demands constant attention and presence. Fair enough, this miraculous space-time anomaly that lets me talk to the future me is no small thing, and it’s worth the effort and focus. In my first experiment, I told the system about my current personality and the kind of life (and career) I imagined for myself. The AI obliged, painting a lovely and encouraging scenario, albeit a bit generic: a case of wishful thinking that made the conversation rather dreary. Of course, it’s nice to imagine a happy and fulfilled future, but nothing that happens on FutureYou is true. So no, I didn’t get to breathe a sigh of relief.

FutureYou clings tightly to the (limited) info you give it. Since it knew I was a writer, it enthusiastically told me that I’ve “changed many people’s lives with my writing” – of course I have! – and when I asked it what had been the darkest and most difficult moment in my life, it didn’t mention any major losses or tragedies. Nope. It just came up with writer’s block, which is certainly a problem and a source of frustration, but it seems like an odd answer for a 60-year-old man. By that age, I will probably have experienced the death of my parents, for example, and the chatbot’s big dramatic event is… writer’s block? Seriously?!

Since this virtual stranger (actually me in the future) kept hyping up my brilliant career, I decided to test the machine’s limits. It claimed I had written “many books”, so I asked it to name one, preferably the most successful. I wasn’t trying to be like Marty McFly and make bets on the future, but rather to see if I could catch it in one of those “hallucinations”, the kind of completely made-up information that language models come up with and pass off as real. You know, maybe I could use that fictional title for something real. It could have been an intriguing idea, a little AI-fueled time-loop creativity.

But no, because that’s when I ran into the first guardrail of this AI, one of the safety limits built into the system by its developers. Instead of answering my question right away, as it had been doing up to this point, FutureYou paused and said, “This book hasn’t been written yet.” A pretty thought-provoking statement, isn’t it? It’s almost like saying the future hasn’t been written yet. This made me realize that the chatbot wasn’t giving me the full picture. It’s almost like getting a message from the future, only to hit a paywall just before the juicy part.

I realized I was chatting with a 60-year-old Pietro Minto. Me, but living in 2048. I contemplated this future, which felt so far away, yet at the same time, made me aware of how fast time is flying. That day, if all goes well, will come, as expected, and even sooner than it seems. So, I wondered, what will life be like in 2048? Will it be nice? If the bot could answer my question, it would mean the world hasn’t ended, which sounds great. But, for all I knew, it could be answering me from a fallout shelter deep underground.

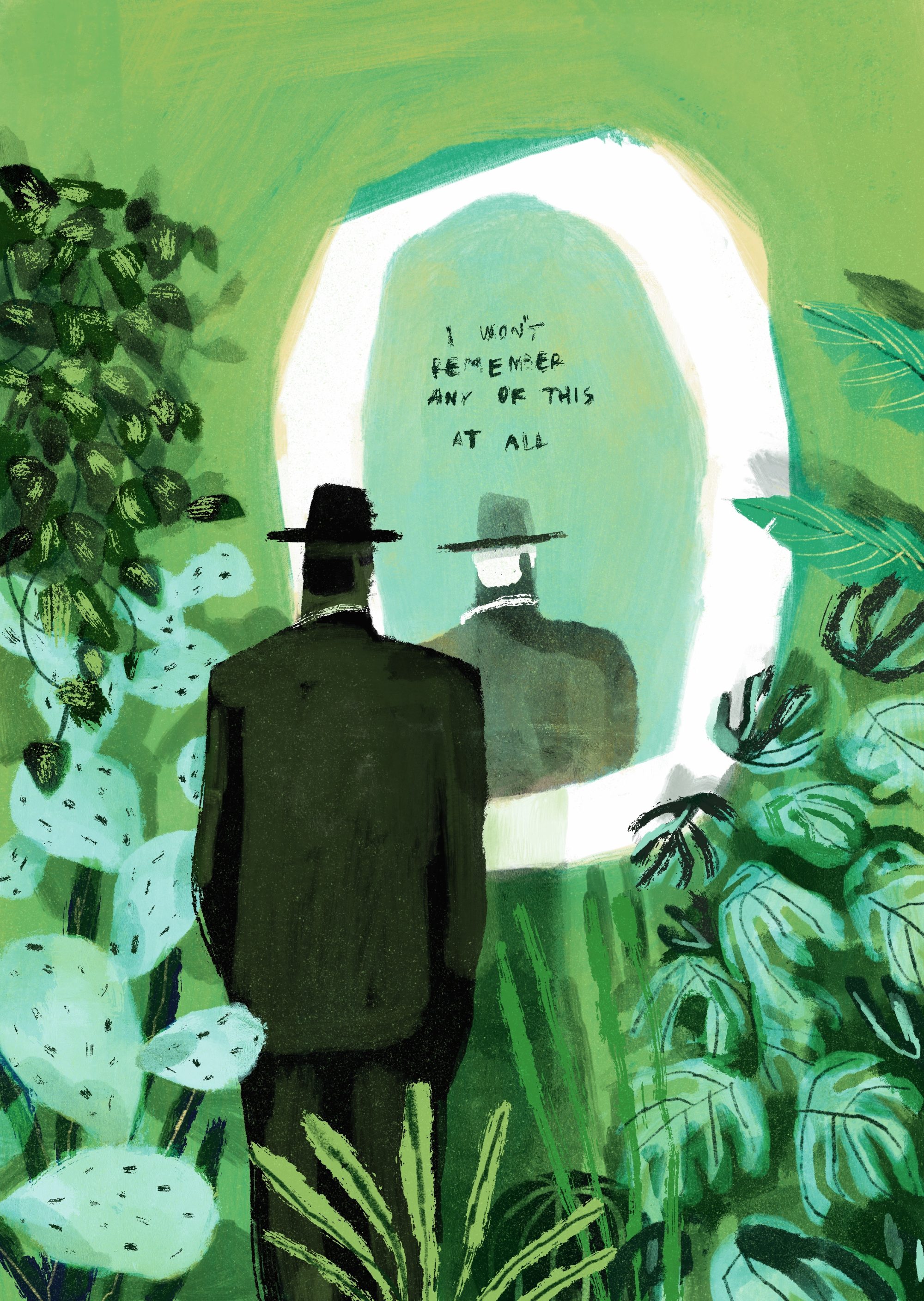

ILLUSTRATION by Marco Brancato

Well aware of the blocks inherent in the AI, I probed its bounds with two broad but meaningful questions. First, the weather: is it hot? Has the planet gone up in flames? And how’s life in general? Its answer surprised me: life is good, it said, and it is living in a time when humanity has somehow managed to tame climate change. Finally, some good news!

My second question was influenced by the wars waged in recent years. I decided not to ask directly if there will be a third world war, but rather to approach the subject subtly by asking if Europe’s borders have changed since my time. This time, the model was less forthcoming and simply told me that the European vision will continue to exist and grow stronger in the future. Perhaps so.

Lately, I’ve been using ChatGPT, Claude, and Google Gemini as personal assistants. Sometimes they spout unthinkable nonsense, other times they enlighten me. You just have to be careful. Overall, I get along with them. But not with FutureYou. There’s something odd between us, between man and machine — a sort of uncanny valley effect that makes our interactions cold and off. I don’t think it’s a technological problem, but rather it has to do with the whole idea behind it: the attempt to be me, or, at least, to mimic me in a far-off future, a time from which all we can really receive are faint and distorted echoes. We’ve always known that it’s impossible to talk to the future, but now I’ve learned that it’s not even desirable.

Talking to your future self is like talking to a stranger. You don’t understand them, and they don’t understand you. I had hoped, at the very least, to walk away with a really cool title for a book or a project, but I guess I’ll have to do things the hard way over the next few years. Then, maybe one day in March 2048, without even realizing it, I’ll find myself talking to a young guy who shares my name, and perhaps I’ll have a sense of déjà vu. Or maybe… I won’t remember any of this at all.